High Data Quality Requirements for SAP BW Systems

SAP BW systems collect data from different source systems. The data is then transformed, integrated and aggregated so that it can be made available to receiving systems such as reporting tools or analytical systems. Because this data is often used for decision-making and passes through several processing stages, ensuring data quality in SAP BW is indispensable.

Challenges of Ensuring Data Quality in the SAP BW System

Based on our many years of experience with clients, the core challenges in assuring data quality in an SAP BW system are the following:

- Data errors lead to wrong decisions and result in loss of reputation. This is why technical departments quickly lose confidence in the reporting system.

- Data error corrections in an SAP BW system are generally very costly and time-consuming

- So that data errors are not first detected in the report, quality checks are implemented at various levels of the data flow. As a rule, the IT department does this with ABAP code written directly in the transformation routines of the SAP BW system.

- Large amounts of data in an SAP BW system require a very high number of quality-assuring individual plausibility checks.

- Creation, development and maintenance of these programmes mean a high effort for IT

- Technical departments are (thus) dependent on the capacities of IT

- Creation and maintenance of quality checks often takes (much) too long

To solve these challenges, technical departments would need to be able to do two things themselves, without IT know-how: 1. create and maintain quality checks independently in self-service (i.e. without programming), and 2. define for themselves whether processing in the SAP BW system should continue or terminate when an error occurs.
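To make the idea of checks "without programming" more concrete, here is a minimal Python sketch in which a quality check is plain data (a field, an operator, an expected value and an error policy) rather than code in a transformation routine. The rule vocabulary, the `Rule` fields and the sample rows are assumptions made up for illustration; they do not describe Q-THOR's actual check format.

```python
import operator
from dataclasses import dataclass
from typing import Any, Callable

# Hypothetical operator vocabulary a self-service editor might offer.
OPERATORS: dict[str, Callable[[Any, Any], bool]] = {
    "not_null": lambda value, _: value is not None,
    ">=": operator.ge,
    "<=": operator.le,
    "in": lambda value, allowed: value in allowed,
}


@dataclass(frozen=True)
class Rule:
    name: str
    field: str
    op: str
    expected: Any = None
    on_error: str = "continue"  # or "abort": stop further processing


def evaluate(rule: Rule, rows: list[dict]) -> list[int]:
    """Return the indexes of all rows that violate the rule."""
    check = OPERATORS[rule.op]
    failed = []
    for index, row in enumerate(rows):
        value = row.get(rule.field)
        # Missing values fail every rule; comparing None would raise a TypeError.
        if value is None or not check(value, rule.expected):
            failed.append(index)
    return failed


def should_abort(rules: list[Rule], rows: list[dict]) -> bool:
    """True if any rule marked "abort" has at least one failing row."""
    return any(rule.on_error == "abort" and evaluate(rule, rows) for rule in rules)


# Illustrative rules and data, defined as data rather than as program logic.
RULES = [
    Rule("amount is non-negative", "amount", ">=", 0, on_error="abort"),
    Rule("currency is known", "currency", "in", ("EUR", "USD")),
]
ROWS = [
    {"amount": 120.0, "currency": "EUR"},
    {"amount": -5.0, "currency": "EUR"},
    {"amount": 40.0, "currency": "XXX"},
]
```

Because each rule carries its own `on_error` policy, the person who defines the check also decides whether a violation should stop processing, which corresponds to the second requirement above.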

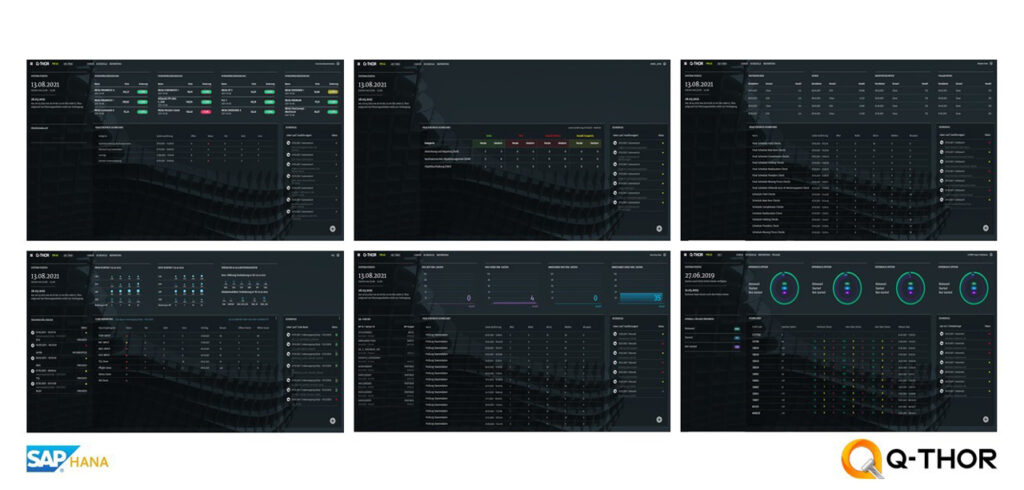

Q-THOR: Self-Service Data Quality Assurance in SAP BW

The product Q-THOR was developed from the idea of providing technical departments with a solution for independently creating quality checks in self-service and scheduling their execution. Q-THOR makes it possible both to ensure the quality of the data in the SAP BW system and to control how that data is processed:

All images on this page © 2022. BIG.Cube GmbH. All rights reserved.

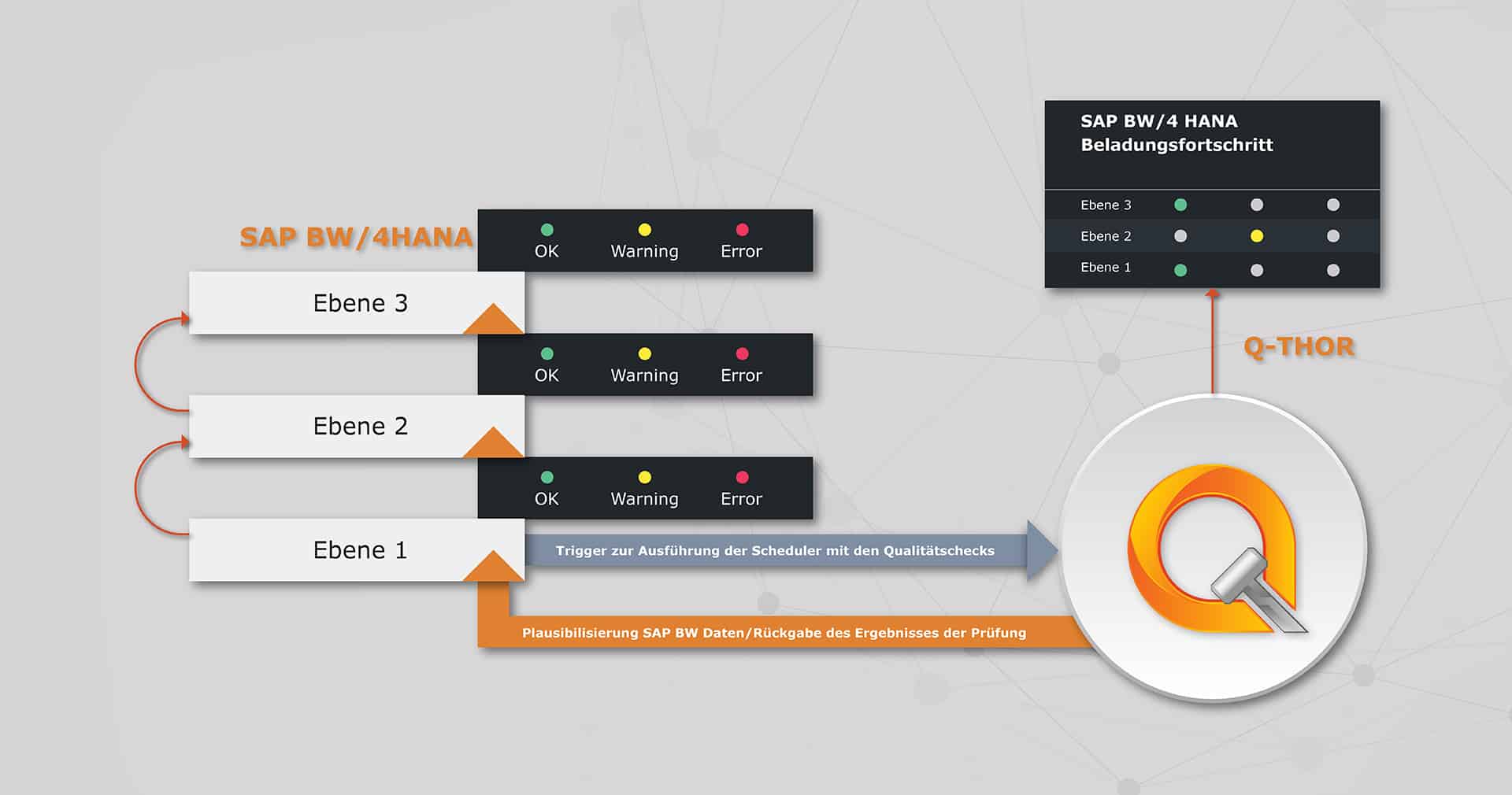

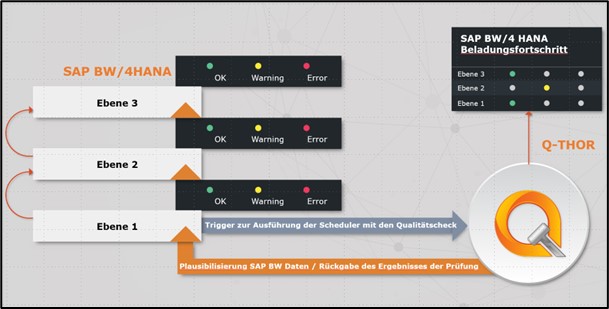

The Interaction between Q-THOR and SAP BW

To check data in SAP BW with Q-THOR, SAP BW is configured directly in Q-THOR as a data source. This allows users to select the data to be checked from the SAP BW system in the check system and define the plausibility checks themselves. The next step is to schedule the execution of these quality checks in a scheduler.

In SAP BW, it is possible to trigger a scheduler in Q-THOR to execute the quality checks before each data processing step. This is done via a separate Q-THOR process directly in SAP BW. The scheduler then performs the quality checks directly on the SAP BW data. Based on the KPI definition set up in Q-THOR when the check was created, a corresponding return code is sent back to the SAP BW system. The SAP BW system evaluates this return code and controls further processing according to the result. In Q-THOR, the current status per processing level in SAP BW and the loading progress of the data are monitored in a dashboard.
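The control flow described here can be sketched in a few lines of Python: a check result is condensed into a KPI, the KPI is mapped to a return code, and the caller decides on that basis whether the next processing step runs. The return code values (0, 4, 8), the error-rate KPI, the thresholds and the step names are all assumptions chosen for illustration; the text does not specify the actual codes or the interface between SAP BW and Q-THOR.

```python
from enum import IntEnum


class ReturnCode(IntEnum):
    # Hypothetical values, not taken from Q-THOR or SAP BW.
    OK = 0       # continue processing
    WARNING = 4  # continue, but flag the load
    ERROR = 8    # stop processing


def gate(total_rows: int, failed_rows: int,
         warn_at: float = 0.01, stop_at: float = 0.05) -> ReturnCode:
    """Map an error-rate KPI to a return code using assumed thresholds."""
    rate = failed_rows / total_rows if total_rows else 0.0
    if rate >= stop_at:
        return ReturnCode.ERROR
    if rate >= warn_at:
        return ReturnCode.WARNING
    return ReturnCode.OK


def run_chain(steps: list[tuple[str, int, int]]) -> tuple[list[str], ReturnCode]:
    """Run the gate before each step; stop at the first ERROR.

    Each step is (name, total_rows, failed_rows) for the data it would load.
    Returns the names of the steps that were executed and the last return code.
    """
    executed: list[str] = []
    code = ReturnCode.OK
    for name, total_rows, failed_rows in steps:
        code = gate(total_rows, failed_rows)
        if code is ReturnCode.ERROR:
            break  # the step is not executed and the chain ends here
        executed.append(name)
    return executed, code


# Illustrative chain: the third gate fails, so the last two steps never run.
STEPS = [
    ("staging", 1000, 0),
    ("harmonisation", 1000, 20),
    ("reporting layer", 1000, 100),
    ("data mart", 1000, 0),
]
executed, last_code = run_chain(STEPS)
print(executed, last_code.name)
```

Keeping the thresholds as parameters of the gate rather than hard-coding them mirrors the idea that the KPI definition belongs to the check, not to the process chain that calls it.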

Q-THOR does not change any standard processes in SAP BW. Rather, it is a tool for monitoring data quality from outside SAP BW against specifically defined quality standards. In addition, any number of quality gates can be placed before data is loaded into SAP BW, for example to check source data in the source systems where it was first created. With Q-THOR, technical departments can graphically monitor the entire data flow from source to SAP BW backend to reporting at a central location. In this way, data errors can be detected and corrected at an early stage.

Conclusion

By using Q-THOR to check the plausibility of data in an SAP BW system, the costly conversions and maintenance of ABAP programmes for IT departments can be eliminated. Technical departments and business users can maintain all data quality checks independently and review the results themselves. Since these users are usually the data owners anyway, exactly the right people are equipped with a very powerful tool to quickly and efficiently create their checks and adjust them if necessary.

Written by Tom Schütz

Review of the DSAG Annual Congress 2022

These trends became clear at the DSAG Annual Congress 2022:

- SAP On-Premise still No. 1: The importance of SAP On-Premise solutions will continue to decline, but still remain at a high level

- As Cloud Solutions are becoming more important at the same time, a trend can be seen: the future is hybrid

- In addition to the technical challenges, many companies are facing changes brought about by digitalisation, transformation and the upheaval of the modern age

- In the area of SAP Analytics, the DSAG Annual Congress showed that SAP provides an automated conversion tool for the transition from SAP BW to the SAP Data Warehouse Cloud with the SAP BW Bridge

- In practice, however, many companies are initially faced with a migration to SAP BW on HANA or BW/4HANA

- The topic of sustainability was also addressed in various facets at the DSAG Annual Congress, from the implementation of individual measures, e.g. at Deutsche Post, to meaningful ESG reporting

We are already looking forward to the DSAG Annual Congress 2023!

Impressions from Leipzig

Credit: © BIG.Cube

Q-THOR – Certified by SAP

Q-THOR went through the following certification process

The following processing steps, among others, were requested and examined by SAP during the Q-THOR certification process:

- Validation of the SAP HANA integrated architecture

- Provision of the Q-THOR functional specification

- Examination of all test cases

- Presentation of the data security concept

- Installation of the software Q-THOR on a HANA system using an installation guide

- Performance of various use cases in Q-THOR and validation of the core functions

Benefits of certification for (future) users

Because of Q-THOR's generic architecture, it also runs on non-SAP landscapes

Success-Story:

Data Quality Management at MEAG with the Standard Software Q-THOR

Improve data quality and correct defects as quickly as possible

Systematic data quality management (DQM) came into the world of MEAG (the asset manager of Munich Re and ERGO) in 2016 with a prototype. The goal was to develop a system that performed fully automated quality checks as soon as data was entered and thereby noticeably improved the quality of data. The motto was thus: Correct defects as quickly as possible.

More than 200 MEAG users in various systems

Since 2016 several more IT systems have been linked up, including parts of operating systems and the risk management system. By 2020 more than 100 MEAG users were actively using the DQM system. Further operating systems were linked up in the course of subsequent projects.

In this use case, plausibility checks are now triggered automatically 750 times a day and run on up to 10 GB of data per day for almost 100 users at several locations and in several teams.

DQM as a Key Component of Digitalisation

Thanks to DQM, additional risks are identified and damage is avoided. The identification and handling of inaccurate data is also significantly more efficient. ‘DQM is a key component of digitalisation in the property sector and sustainably improves data quality,’ says Siegfried Korb, Head of Property Management Germany at MEAG MUNICH ERGO Assetmanagement GmbH.

Besides the plausibility checks, the existing approval tool has also been replaced by fully integrated automated plausibility checking in DQM.

Q-THOR’s Track Record

As a result, the DQM system has an excellent track record: several hundred active users in a double-digit number of teams and almost 2,000 transactional plausibility checks performed per day. The next milestone is expansion to incorporate the issue of IFRS 9. The group will use the DQM system for data supply and plausibility checking and the number of users will more than double.

Using the standard software Q-THOR from BIG.Cube GmbH, MEAG is therefore able to guarantee its data quality in DQM, among other things.

A look back at the Deutsche Kongress Master Data Forum 2021

At the Deutsche Kongress Master Data Forum in Düsseldorf the focus was primarily on the topics of Digital Transformation, Master Data and Data Governance. The event therefore provided interesting insights into matters such as the current status of data governance projects at the participating companies, which came from various industries. In a number of exciting workshops and round tables, talks were given and discussions were held on topics ranging from trends in master data management and data quality as a success factor to the successful implementation of a data governance structure.

At the Master Data Forum, the following trends became clear:

- The goal is data-driven: Companies are collecting more and more data. Technology is continuously adapting to this and the need for information is growing. In order for the data to yield usable information, the quality of the data must be of a certain standard

- The motivation behind the data quality strategy often comes from the specialist divisions: The question is not whether the topic of data quality is addressed within companies, but how it is addressed

- The driving forces of data strategy include missing revenue as a result of incorrect data, the risk of a bad image and processes within the company that require improvement

- Change management is gaining in importance in large projects

- Companies want to make greater use of the data available to them and be guided by the data. The necessary infrastructures, processes and methods of working must be geared towards this

- Data management and data quality are therefore directly linked to corporate success

We’re already looking forward to the Master Data Forum 2022!

Impressions from Düsseldorf

Credit: © BIG.Cube

Data migration:

Ensure data quality with Q-THOR

From tedious delays and budget overruns

Data migration causes pain in numerous places. Unfortunately, one of the most painful points often lies in the quality of the data being migrated. Essentially, the problem is quite simple: the quality of the data being migrated must be ‘correct’. This matters first of all because inadequate data quality leads to new plausibility checks and data cleansing. In the long run, this in turn leads to tedious delays and unplanned budget overruns.

Key challenges of data migration

Experience has shown that in almost all of the data migration projects carried out, the main challenges are:

- reliable statements regarding the structure of data and content

- increasing expenditure for IT and specialist divisions on the implementation of new plausibility checks

- efficient, prompt performance of data cleansing

Data migration is not just time-consuming; it is also cost-intensive. It is therefore vital to take the key challenges into account right at the beginning, in the project and budget planning stage. However, this does not mean that the budget and schedule of a migration project have to be increased by dizzying amounts.

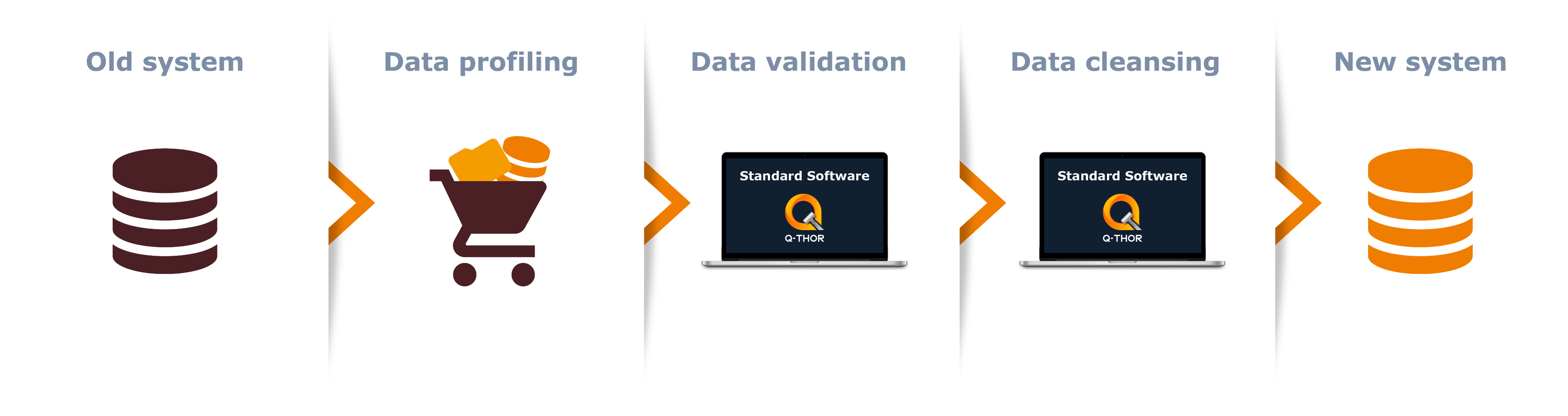

Data quality in migration projects

When migrating old data to a new system, it is often assumed that the data will already have the content and format expected by the new system. Unfortunately, that is not usually the case, which results in a lot of manual work. Standard software can greatly reduce the time and budget involved here. This is because, on one hand, it ensures that the data being migrated is reliable while, on the other hand, it also automates data cleansing. The figure below illustrates a potential scenario, the example here being the standard software Q-THOR:

One initial milestone is the performance of data profiling analyses on the pool of data being migrated. Based on these results, the necessary data plausibility checks can then be applied and performed. Finally, the data defects can be examined and cleansing orders for this data can be generated.
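The three steps named here (profile the data, apply plausibility checks, generate cleansing orders) can be illustrated with a small Python sketch. Everything in it is an assumption for illustration: the column name, the set of valid country codes and the shape of a "cleansing order" are made up and are not taken from Q-THOR.

```python
from collections import Counter
from typing import Callable


def profile(rows: list[dict], column: str) -> dict:
    """Step 1: basic data profiling for one column."""
    values = [row.get(column) for row in rows]
    present = [value for value in values if value not in (None, "")]
    return {
        "rows": len(values),
        "missing": len(values) - len(present),
        "distinct": len(set(present)),
        "most_common": Counter(present).most_common(1),
    }


def cleansing_orders(rows: list[dict], column: str,
                     is_valid: Callable[[str], bool]) -> list[dict]:
    """Steps 2 and 3: apply a plausibility check and list what must be fixed."""
    orders = []
    for index, row in enumerate(rows):
        value = row.get(column)
        if value in (None, "") or not is_valid(value):
            orders.append({"row": index, "column": column, "value": value})
    return orders


# Hypothetical legacy data with inconsistent and missing country codes.
LEGACY = [
    {"country": "DE"},
    {"country": "de"},
    {"country": ""},
    {"country": "AT"},
    {"country": "DE"},
]
VALID_COUNTRIES = {"DE", "AT"}


def is_valid_country(value: str) -> bool:
    return value in VALID_COUNTRIES


print(profile(LEGACY, "country"))
print(cleansing_orders(LEGACY, "country", is_valid_country))
```

In this example the profile shows three distinct values where only two are expected, which is the kind of finding that would lead to a rule like `is_valid_country` in the first place.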

Formula for successful data migration: Expert knowledge + standard software

Anyone who has ever worked in data migration can confirm that expert knowledge of data analysis is vital. Another success factor is the use of standard software. More and more project managers are discovering this fact. This is because a combination of expert knowledge and standard software enables migration projects to be planned more reliably and carried out more successfully. Furthermore, the quality checks defined in the standard software for the migration project can be reused later during the productive operation of the new system. Q-THOR is a piece of standard software that has already proven itself in migration projects, among other things, and demonstrated these exact benefits.

Written by Tom Schütz

A look back at the 4th Master Data Management Annual Forum

Data quality as one of the key topics

Data-driven as the new goal

Impressions from Vienna

Credits: © imh

Q-THOR on 23 & 24 November at the Master Data Forum in Düsseldorf

As standard software for data quality, Q-THOR is a sponsor of the Deutsche Kongress Master Data Forum, which will be held on 23 and 24 November 2021 in Düsseldorf. Q-THOR will be showcased at 3:00pm on 23 November in the course of the workshop ‘Data Quality as a Success Factor in Data-Driven Management’. The focus will be on the following 3 topics:

- How transparent is the data quality in your company?

- How can the data quality layer be integrated into a company’s own data architecture quickly and easily?

- How does the standard software ‘Q-THOR’ guarantee data quality in real time?

Would you like to arrange a face-to-face meeting at the event?

Q-THOR – A secure investment in an innovative software

Promoting research and development

The Federal Ministry provides support for research and innovation projects through topic-specific and non-specific funding programmes. The wide range of funding is geared on one hand towards key areas of innovation and technology and on the other hand towards a variety of challenges and starting points.

The funding is based on several aspects. In principle, the following areas are analysed:

Degree of innovation

How innovative is a project from a scientific or technical perspective?

Application

What is the prospect of success? Is there a utilisation concept?

Avoidance of double funding

Has funding previously been granted for the idea behind the project, or will it be?

You can find extensive information regarding this topic on the homepage of the Federal Ministry of Education and Research.

Q-THOR – A product with innovative drive

Q-THOR successfully passed the assessment process for prospective funding by the Federal Ministry of Education and Research. The following aspects in particular led to the Ministry’s positive decision:

- the innovative and generic architecture

- the wide range of functions

- the intuitive self-service use

- the highly efficient and cost-cutting process of validating company data from any source in real time

- the simple, customised integration of the product into companies’ system and data landscapes, regardless of the industry
